
It’s way too easy to cheat now

The rise of generative AI poses significant challenges for brand strategy, particularly in industries like food delivery and insurance, where fraudulent practices are becoming increasingly sophisticated. Brands must adapt by implementing robust verification systems and maintaining transparency to protect their reputation and customer trust in an era of digital deception.

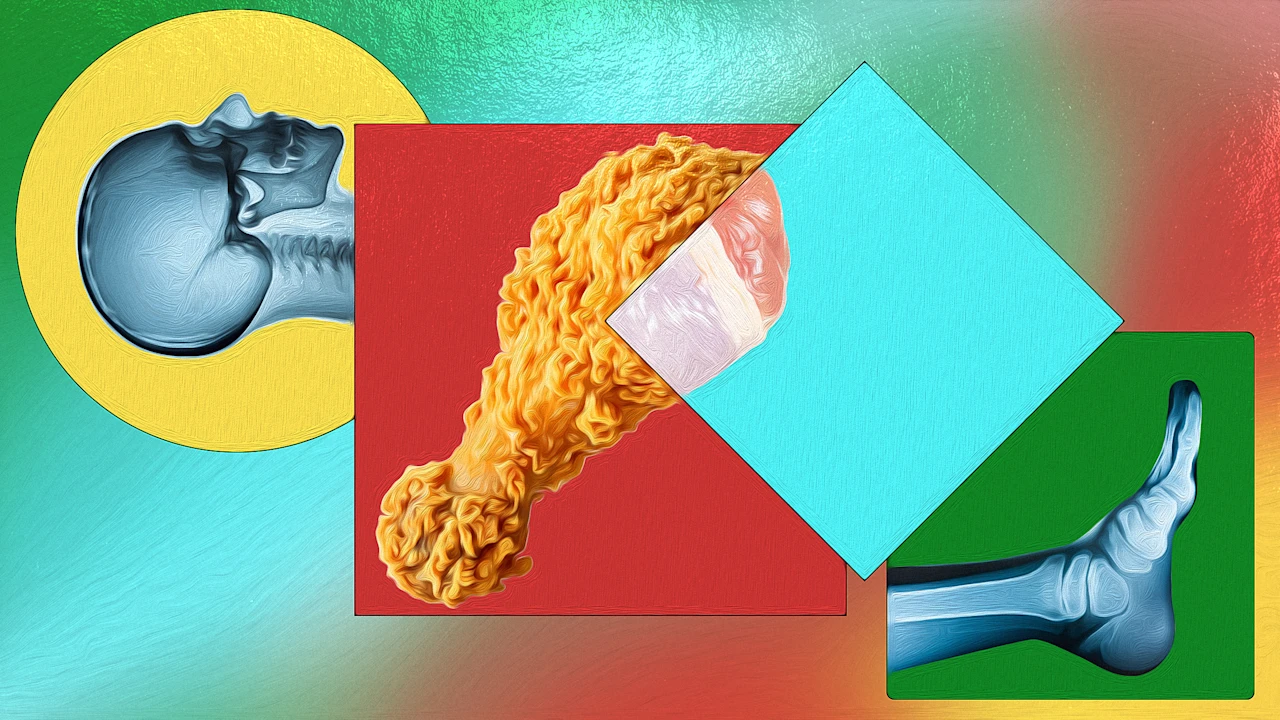

FastCompany: It’s so easy to cheat now. Using generative AI, anyone can get a free meal or product. They can even get free money by scamming the government itself. And, as radiologists have just discovered, they can even cheat doctors and insurance companies by using AI-generated X-rays. According to a new study published by the Radiological Society of North America, most experts can’t distinguish fake fractures from the real thing now. Undetectable insurance fraud is one click away. It’s just the latest in a growing list of low-hanging-fruit, zero-cost scams made possible by the power of AI. And it’s only going to get worse.

Fake X-rays

The Radiological Society of North America’s study subjected 17 medical specialists from six countries, some with up to 40 years of field experience, to a visual test involving 264 X-rays, half authentic and half synthetic images created by AI tools like ChatGPT and Stanford’s open-source RoentGen model. When left entirely in the dark about the presence of these artificial images, the physicians correctly identified the synthetic X-rays only 41% of the time.

Even after receiving explicit warnings that fakes were hidden in the batch, their average success rate limped up to 75%, ranging between a dismal 58% and a respectable but imperfect 92%. A doctor’s decades of hands-on experience offered little statistical advantage in catching the deception, the study says, though musculoskeletal experts performed marginally better than their peers. To make matters worse, the large language models responsible for birthing this digital chicanery—including GPT-4o, GPT-5, Gemini 2.5 Pro, and Meta’s Llama 4 Maverick—fared no better as automated detectives, scoring accuracy rates between 57% and 85%.

“Our study demonstrates that these deepfake X-rays are realistic enough to deceive radiologists, the most highly trained medical image specialists, even when they were aware that AI-generated images were present,” noted lead author Dr. Mickael Tordjman. “This creates a high-stakes vulnerability for fraudulent litigation if, for example, a fabricated fracture could be indistinguishable from a real one.

“There is also a significant cybersecurity risk if hackers were to gain access to a hospital’s network and inject synthetic images to manipulate patient diagnoses or cause widespread clinical chaos by undermining the fundamental reliability of the digital medical record.” According to Tordjman, AI-generated medical images often look too perfect, with bones that are “overly smooth, spines unnaturally straight, lungs overly symmetrical, blood vessel patterns excessively uniform, and fractures that appear unusually clean and consistent, often limited to one side of the bone.” But that’s just yesterday’s crop of tools.

As with AI-generated video, AI will soon make these X-rays absolutely perfect and undetectable. It’s the nature of the ever-evolving AI beast. To fight this, experts are demanding invisible watermarks and cryptographic signatures directly linked to the technician capturing the scan, effectively acting like a mathematical seal of authenticity that proves a human body was actually in the room.

Shallowfakes and raw deals

Fraudulent X-rays are a serious example of the more quotidian truth-bending that’s already happening.


The article addresses the critical challenge of generative AI in brand strategy, highlighting the need for brands to adapt to maintain trust, which is highly relevant and significant in today's digital landscape.